Artificial Intelligence (AI) is changing cyber security. It is enhancing the capabilities of bad actors to perform more sophisticated attacks while empowering cyber security professionals to elevate their defenses. The question everyone wants to know is, "Will AI replace cyber security jobs in the future?"

AI has been at the heart of many new debates in cyber security. Is it safe? Is it helping the good guys or the bad guys? Is it going to change how we do cyber security? These are all important questions to consider. However, if you're working in cyber security or looking to break into the industry, you want to know if AI will affect your job.

This article looks at what modern AI is, how it relates to the current cyber security job market, and how it could affect your future cyber career. It finishes with actionable advice that will help you best prepare for the world of tomorrow.

Let's jump in and answer, "will AI take over cyber security?"

- What Is Modern AI?

- Cyber Security Job Market and Career Gap

- Evolving Role of AI in Cyber Security

- Real-World AI Impact: Case Studies

- How AI is Reshaping Cyber Security Careers

- AI Governance, Risk, and Compliance: A New Career Frontier

- Preparing for the Future

- Conclusion - Will AI Take Over Cyber Security?

- Frequently Asked Questions

What Is Modern AI?

Before exploring how artificial intelligence (AI) affects cyber security, let's first look at modern AI and its advantages.

Modern AI refers to the development of computer systems that are capable of performing tasks that would usually require human expertise or intelligence to perform. To do this, algorithms are created that train Large Language Models (LLMs) using large datasets (big data) so that they can be used to make accurate decisions when prompted.

The LLMs consume information and create artificial neural networks to link related topics. This repeated consumption of data and training refines the model and leads to the AI algorithm becoming capable of making an "intelligent" decision based on user input. This process is known as machine learning (or deep learning) and has been used for image recognition, natural language processing, and reinforcement learning in areas like robotics and video games.

In recent years, AI and LLMs have reached the point where they can be integrated with complex systems to automate some data analysis tasks. This was made popular by ChatGPT, but there has been an influx of AI-powered tools that have hit the market, from AI image generation tools like MidJourney to domain-specific tools such as Github Copilot. The current state of AI is impressive, but it has limitations.

Advantages AI Offers:

- Provides access to a vast knowledge base immediately.

- It is more efficient and intuitive to use than regular search engines.

- Can augment the workflow of professionals to increase their efficiency and throughput.

- Reliable copilot for task execution.

- Automates the protection of systems.

Current Limitations of AI:

- Lacks human judgment and intuition.

- Relies on a high quantity of quality data to train and avoid bias.

- Needs humans to validate, enhance, and train with domain-specific knowledge.

- Concerns over the security and privacy of data uploaded to AI systems.

Cyber Security Job Market and Career Gap

Now that you know a bit about AI and its advantages to the modern workplace, let's look at the current cyber security job market to understand how AI may affect your career.

There is currently a high demand for skilled cyber professionals in the job market. According to the Cybersecurity Talent & Workforce Shortage Stats 2025 report, the global cyber security workforce gap stands at 4.8 million unfilled positions.

While this represents a slight increase from 2024, the growth rate has slowed considerably compared to the rapid expansion seen between 2020 and 2023. Some regions are even showing a slight narrowing of the gap due to AI automation and shifting economic conditions.

The surge in cybercrime is adding urgency to this shortage. Cybercrime has already hit the $10.5 trillion annual cost projected years ago, and new forecasts put the global impact at $15-$20 trillion by 2030.

As more businesses move online and remote, attackers gain more targets, while defenders face exploding volumes of network traffic and faster-moving attack chains.

This compounds the problem: the talent shortage leaves roles unfilled, and the skills organizations need keep evolving. To cope, companies are accelerating AI integration in security: using AI for initial threat detection, alert triage, and anomaly spotting across massive telemetry. But AI doesn’t just reduce workloads; it also changes them.

Routine entry-level tasks are being automated, while new roles emerge around building, tuning, and governing AI systems, including oversight of AI ethics (bias, transparency, accountability) and safe deployment. The transformation isn’t theoretical anymore: AI is simultaneously widening the threat landscape and becoming the toolsets teams rely on to keep up.

FREE Cyber Security Career Guide

Thinking of a career in cyber security? Our Cyber Security Career Guide walks you through the industry landscape, skill-paths, certifications, and realistic timelines to become job-ready.

Evolving Role of AI in Cyber Security

With AI now embedded on both sides of the battlefield, a big question hangs over the industry: will AI take over cyber security? The short answer is no - not in the sense of replacing security teams, but it is taking over more of the repetitive detection and triage work. That shift is exactly why the field is changing so fast, and why organizations are racing to adopt AI while also preparing for AI-powered attacks.

So what role does AI play in relation to cyber security? Well, both attackers and defenders are using AI right now to perform sophisticated attacks against systems and to augment the analysis of cyber incidents. Let's explore how AI is currently used for good and bad in the cyber security industry.

AI for Good

AI has been empowering security teams as a guardian to protect systems from advanced cyber threats by leveraging the power of rapid big data analysis for threat detection. It is also being used as a reliable co-pilot for task execution to increase the efficiency of professionals.

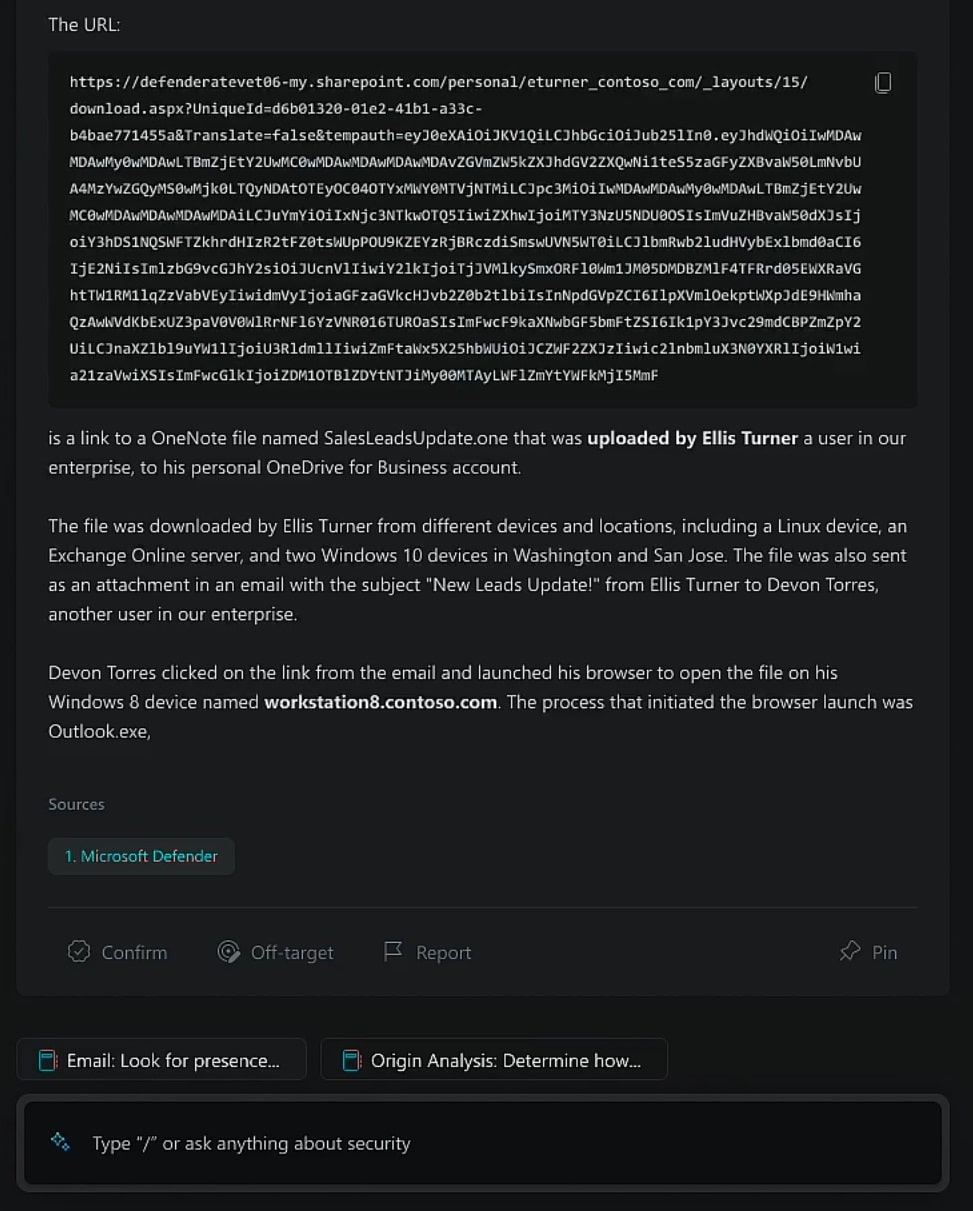

A notable example of this is Microsoft Security Copilot, which security teams have used as an all-in-one virtual assistant that uses the power of AI to augment workflows. As of 2025, Security Copilot has matured significantly, offering enhanced capabilities for SOC analysts to streamline investigations, improve the quality of detections by reducing false positives, and provide support for security operations.

Modern Defensive AI Tools:

- Microsoft Security Copilot

- CrowdStrike Charlotte AI

- Palo Alto Precision AI

- SentinelOne Singularity with GenAI features

- Wiz AI Security Posture Management (AI-SPM)

- Snowflake Cortex AI

AI has also been integrated with Endpoint Detection and Response (EDR) platforms, Security Information and Event Management (SIEM) platforms, and malware analysis tools across the industry.

Cyber security domains AI-powered tools are being used in:

- Incident Response

- Threat Intelligence and Threat Hunting

- Compliance Monitoring

- Vulnerability Management and Third Party Risk Management

- Threat Detection and Detection Engineering

- Malware Analysis and Reverse Engineering

- Open Source Intelligence (OSINT)

- User and Entity Behavior Analytics (UEBA)

AI for Bad

That said, AI is not just a tool for good. Malicious actors and cybercriminals have been using AI to scale attacks, automate reconnaissance, and evade controls - from generating malicious software variants to spinning up waves of AI-generated phishing emails. These campaigns can rotate malicious IP addresses rapidly and use AI for identifying patterns in victim behavior, making targeting sharper and harder to block.

In parallel, attackers are automating routine tasks (like scanning, credential stuffing, and exploit chaining), so what once took teams days can now happen in minutes, accelerating both reach and impact.

An example of this is phishing attacks, which are the most common form of cyber crime and are on the rise. Attackers are using ChatGPT and other natural language processing tools to craft emails and text messages that trick victims into revealing sensitive information or providing them access to corporate systems. AI can make these attacks more convincing through generative content creation that attackers can use to manipulate human emotions.

Modern Offensive AI Tools:

- Claude 3 and Claude Code for automated reconnaissance

- Grok for agentic workflows

- Gemini Advanced

- GPT-4o and GPT-5 Red-Team Agents

- Automated exploit generation assistants

- AI-powered phishing and deepfake creation tools

Attackers are already using AI to create far more complex threats, especially in social engineering. We’re seeing AI-generated voice clones that imitate loved ones to pressure victims into paying ransom, and as the tech improves, these deepfakes will become more convincing, more common, and harder to spot.

A striking example is the UK engineering group Arup, which reportedly lost HK$200 million (about $25 million) after criminals used AI-generated video and audio to impersonate a senior manager on a video call, triggering fraudulent financial transfers.

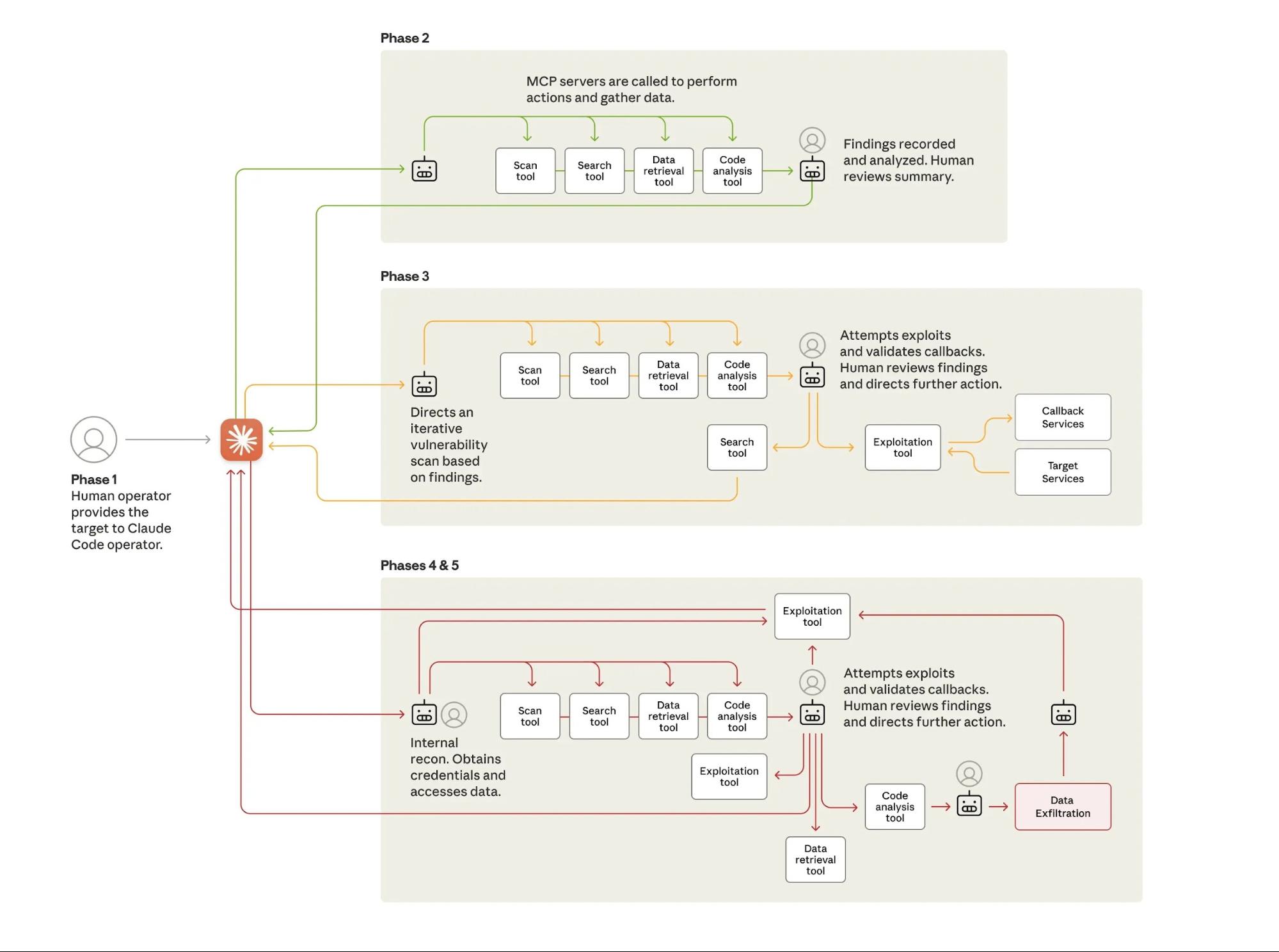

These AI-powered social engineering tactics represent just one facet of the threat. Advanced attackers are now integrating AI throughout their entire operation, using tools like Claude Code and the Model Context Protocol to automate reconnaissance, exploitation, and lateral movement while retaining human control over strategic decisions.

To keep up with these threats, organizations need to move beyond basic threat detection that looks only for known signatures or obvious phishing cues. AI-driven scams can slip past traditional filters, increasing the risk of costly data breaches and fraudulent transfers. That’s why many existing security teams are turning to enhanced threat detection - using AI to spot subtle anomalies in behavior, language, login patterns, and transaction flows, and to correlate weak signals across high volumes of security alerts.

AI is Now Both a Weapon and a Shield

The dual nature of AI in cybersecurity has never been more apparent. Defenders leverage tools like CrowdStrike Charlotte AI and Microsoft Security Copilot to automate threat detection and response.

Meanwhile, adversaries use Claude Code, GPT-4o, GPT-5, and Gemini Advanced to automate reconnaissance, generate exploits, and craft convincing social engineering campaigns.

The cyber security arms race has entered a new era where both sides wield the same foundational technology.

Real-World AI Impact: Case Studies

The theoretical discussions about AI's role in cybersecurity have given way to concrete examples. Here are three significant incidents that demonstrate how AI is reshaping the field right now.

State-Sponsored Attack Using Claude Code (November 2025)

In November 2025, Anthropic revealed that a Chinese state-sponsored group had used Claude Code to automate a significant portion of an intrusion attempt. The AI system performed reconnaissance, exploit generation, and lateral movement preparation, automating an estimated 80-90% of the attack workflow. While the operation still required human oversight and decision-making, the AI drastically reduced the attacker workload and accelerated the timeline of the campaign.

This incident marked a turning point in understanding adversarial AI capabilities. It demonstrated that sophisticated threat actors are not just experimenting with AI tools—they are actively deploying them in real-world operations.

CrowdStrike Layoffs Tied to AI Efficiencies (May 2025)

In May 2025, CrowdStrike announced the elimination of approximately 500 positions, representing roughly 5% of its workforce. In a memo included in a securities filing, CEO George Kurtz stated that "AI is flattening our hiring curve" and acknowledged that "AI is reshaping every industry," including cyber security. The cuts were confirmed through an SEC 8-K filing and covered extensively by major business outlets.

The layoffs were tied to AI-driven automation that made certain operational roles redundant. However, the company emphasized it would continue hiring in product engineering and customer-facing roles. This represents a restructuring rather than a wholesale elimination of cyber security jobs, but it signals a clear trend: AI is changing the composition of security teams.

Tier-1 SOC Automation Becomes Standard Practice

By 2025, AI automation of Tier-1 SOC functions has become standard practice across the industry. AI systems now handle alert triage, enrichment, and ticket drafting—tasks that once consumed the majority of junior analyst time. Many organizations have responded by merging Tier-1 and Tier-2 responsibilities, effectively eliminating the traditional entry-level SOC analyst role.

According to Darktrace's 2025 Report, The State of Al Cybersecurity, 63% of security stakeholders believe their existing cybersecurity stack solely or mostly leverages generative AI, altering their workflows.

Security teams are already seeing AI reshape day-to-day workflows. In incident response, for example, AI can automatically trigger containment steps during an active attack, reducing triage time and giving analysts space to investigate and remediate properly. It’s also changing how teams prepare: AI-driven breach and attack simulations run faster, more frequently, and with broader coverage, improving testing cycles and overall readiness.

How AI is Reshaping Cyber Security Careers

The changes AI brings to cyber security careers are no longer hypothetical. They are measurable, documented, and accelerating.

Traditional Entry-Level Roles Are Declining

The traditional pathway into cybersecurity, starting as a Tier-1 SOC analyst monitoring alerts and escalating incidents, is rapidly disappearing. AI automates most repetitive monitoring tasks, and organizations increasingly expect even junior analysts to work alongside AI tools rather than perform purely manual analysis.

However, this does not mean early-career opportunities have vanished. Instead, they have evolved. New entry points into the field include:

- AI SOC Workflow Operator

- AI Security Analyst Assistant

- AI Governance Coordinator

- Adversarial Testing Junior

- Prompt Security Engineer (an emerging junior-level role)

These roles require a different skill set than traditional positions. They emphasize AI literacy, prompt engineering for security contexts, and the ability to evaluate AI-generated outputs for accuracy and security implications.

AI Will Improve Efficiency

AI is a transformative technology that has already improved the speed at which cyber professionals can perform tasks. It helps triage and respond to emerging threats faster and more effectively using insights gleaned from big data analysis. This has reduced the manpower required to perform certain business functions and cut company costs while increasing productivity.

But efficiency gains come with trade-offs: AI can scan massive streams of alerts and surface potential threats in seconds, but its conclusions are only as good as its training data. Human analysts still have to apply strategic thinking, ensure data security isn’t compromised by false positives or blind spots, and step in for nuanced, high-stakes calls that require context and creative problem solving beyond what the model has seen.

AI Will Be Used as a Tool by Attackers

AI is just a tool. It can be used to defend systems, but it can also be used to attack systems. Cybercriminals and malicious actors are using AI to perform more sophisticated, large-scale attacks against organizations. High-profile attackers leverage AI to perform attacks that would not have been possible for human operators alone. Less-skilled attackers use AI to enhance their capabilities and target more mature organizations.

The democratization of AI tools means that attack sophistication is no longer limited to well-resourced threat actors. A moderately skilled attacker with access to Claude Code or GPT-4o can now perform reconnaissance and exploit development at a level that previously required specialized expertise.

AI Will Still Need Human Interaction

AI is still very much in active development. It has not reached the point where it can fully mimic the intelligence of a human being, nor our creativity, and will still require some level of human expertise and interaction for the foreseeable future. This means cyber professionals will need to learn new skills in areas such as machine learning, data analysis, and AI-driven security solutions to stay ahead as the demand for AI increases.

More importantly, AI lacks accountability. When an AI system makes a mistake—approving a malicious file, missing a critical alert, or generating an incorrect recommendation—a human must take responsibility for the outcome. This fundamental requirement for human oversight ensures that cybersecurity will remain a human-driven field, even as AI handles more of the technical workload.

AI Will Lead to New Types of Cyber Security Jobs

AI has already lessened the number of traditional entry-level jobs and narrowed the skill gap that existed in the cyber security industry. However, it requires humans to develop, train, implement, maintain, and secure AI. This has led to new types of cyber security jobs, such as AI security analysts and machine learning security engineers, as AI becomes more prevalent in cyber security.

The rise of AI governance and regulatory frameworks has created entirely new career paths that did not exist just a few years ago.

AI Governance, Risk, and Compliance: A New Career Frontier

One of the most significant developments in the AI and cyber security landscape has been the emergence of formal governance and regulatory frameworks. These regulations have created a new category of cyber security careers focused specifically on AI systems.

Regulatory Landscape

Several major frameworks have been established or are in active enforcement:

EU AI Act - The EU’s AI Act is the first major comprehensive AI regulation. It uses a risk-based model (unacceptable, high, limited, minimal risk) and sets strict requirements for high-risk systems, with phased implementation and some high-risk rules now expected to apply in 2027.

NIST AI RMF - Released in 2023. NIST’s voluntary AI Risk Management Framework provides a structured way to map, measure, manage, and govern AI risks. It’s increasingly used as a reference for enterprise and government AI governance, with a GenAI Profile added in 2024.

ISO/IEC 42001 - This is the first international standard for AI management systems. It gives organizations requirements for governing AI responsibly across the lifecycle, including risk, transparency, security, privacy, and ethical use.

New Career Roles Created by AI Governance

These regulatory frameworks have driven demand for specialized roles that blend cyber security expertise with AI literacy and compliance knowledge:

AI Governance Specialist - Responsible for ensuring an organization's AI systems comply with relevant regulations and internal policies. This role involves creating governance frameworks, conducting AI system audits, and liaising between technical teams and legal/compliance departments.

AI Risk and Compliance Officer - Focuses specifically on identifying and mitigating risks associated with AI deployment, including security vulnerabilities, privacy concerns, and potential for misuse. This role often reports directly to the CISO or Chief Risk Officer.

AI Safety and Security Engineer - A technical role focused on implementing security controls for AI systems, testing AI models for vulnerabilities, and ensuring AI systems cannot be manipulated by adversaries. This includes adversarial machine learning, prompt injection defense, and model poisoning prevention.

These roles represent genuine career growth opportunities in cyber security. They require a combination of traditional security knowledge and emerging AI expertise, making them ideal for cyber security professionals looking to future-proof their careers.

Preparing for the Future

With the inevitable collaboration between AI and cyber security, how is it best to prepare for this new and exciting future? Let's look at some actionable steps you can make right now.

Embrace AI and Look for Opportunities to Use It

AI is here to stay. It offers huge advantages for you as a cyber security professional, and you should try to make the most of these advantages at every opportunity you get so you can stay ahead of the game. This could be using AI to help you learn new cyber security concepts, perform research, or even create a new cyber security tool. You can then discuss using AI during a job interview to impress future employers.

The professionals who treat AI as a strategic partner rather than a threat will be the ones who advance their careers most rapidly.

Stay Up to Date on the Latest AI Trends and Technologies

AI is evolving fast. New LLMs and AI systems are being developed and refined every day. Meanwhile, companies are trying to integrate AI with their existing products or create new ones. To prepare for the future, you need to stay updated with the latest AI trends and technologies so you know how AI is being used and where it is likely going.

This will allow you to prepare for future job opportunities, learn new and exciting AI tools that will empower you in your day-to-day work, and develop the skills necessary to tackle the future challenges AI will pose. One way to keep updated with the latest AI trends and technologies is by following the StationX blog, where industry professionals give insights and guidance on all things cyber.

Expand Your Skillset

To have a long and fulfilling cyber security career, you must always try to expand your skill set. Cyber security is a demanding industry that rapidly evolves to keep up with new trends and technologies. This means you must become a lifelong learner and continuously expand your skillset. When it comes to AI, this is no exception.

Essential Skills:

AI Literacy - Understanding how AI systems work, their capabilities, and their limitations is no longer optional. According to LinkedIn's 2025 Skills Report, AI literacy is the fastest-rising skill globally, and cybersecurity-specific job postings consistently list AI competency as a top requirement.

Prompt Engineering for Security - The ability to craft effective prompts for AI systems in security contexts is becoming a specialized skill. This includes knowing how to get useful threat intelligence from AI tools, how to use AI for code review, and how to detect when AI outputs contain errors or hallucinations.

SOAR Automation and Scripting - Security Orchestration, Automation, and Response (SOAR) platforms are increasingly integrated with AI. Python remains highly relevant for writing automation scripts and customizing AI-driven workflows.

Dataset Evaluation and Log Correlation for AI Workflows - Understanding how to prepare data for AI analysis and how to interpret AI-generated insights requires knowledge of data quality, normalization, and correlation techniques.

Adversarial Machine Learning - As attackers use AI, defenders must understand how AI systems can be attacked. This includes model poisoning, adversarial examples, and prompt injection attacks.

AI Model Evaluation and Guardrail Testing - The ability to test AI systems for security vulnerabilities, evaluate their outputs for accuracy, and implement appropriate guardrails is becoming a core security skill.

Knowledge of AI Regulations - Familiarity with the EU AI Act, NIST AI RMF, and ISO/IEC 42001 is increasingly important, especially for roles in governance, compliance, and risk management.

To learn about the skills required to become a cyber security professional, give Top Cyber Security Skills You Need for an Exciting Career a read.

Conclusion - Will AI Take Over Cyber Security?

AI is not going to replace cyber security professionals. However, it will replace many routine tasks, which means the people who learn to work with AI-powered systems will pull ahead. AI is reshaping roles, compressing some job ladders, and expanding others. The CrowdStrike layoffs, the Claude Code attack, and the widespread automation of Tier-1 SOC functions demonstrate that AI's impact on cyber security careers is real and measurable.

Artificial intelligence can automate detection and triage, surface AI flags faster than humans, and strengthen security measures through more proactive defense. Still, those outputs need human analysts to validate context, handle edge cases, and make strategic decision making calls during real incidents.

Cyber security remains human-led because judgment, accountability, and navigating ethical and privacy concerns can’t be outsourced to models. The future belongs to teams that pair AI speed with human expertise: AI handles scale and signals, people interpret impact and decide what to do next.

You can keep up-to-date by following StationX blog for cutting-edge insights and knowledge or by signing up for the StationX Master's Program. Our program teaches you the skills needed for your ideal cyber security career, and connects you with mentors, our career toolkit, and a custom study roadmap to get you there faster.

If you enjoyed this content and want to learn more about AI and cyber security, see some of our Information Security Course Bundles below, granting lifetime access to top-rated courses.